
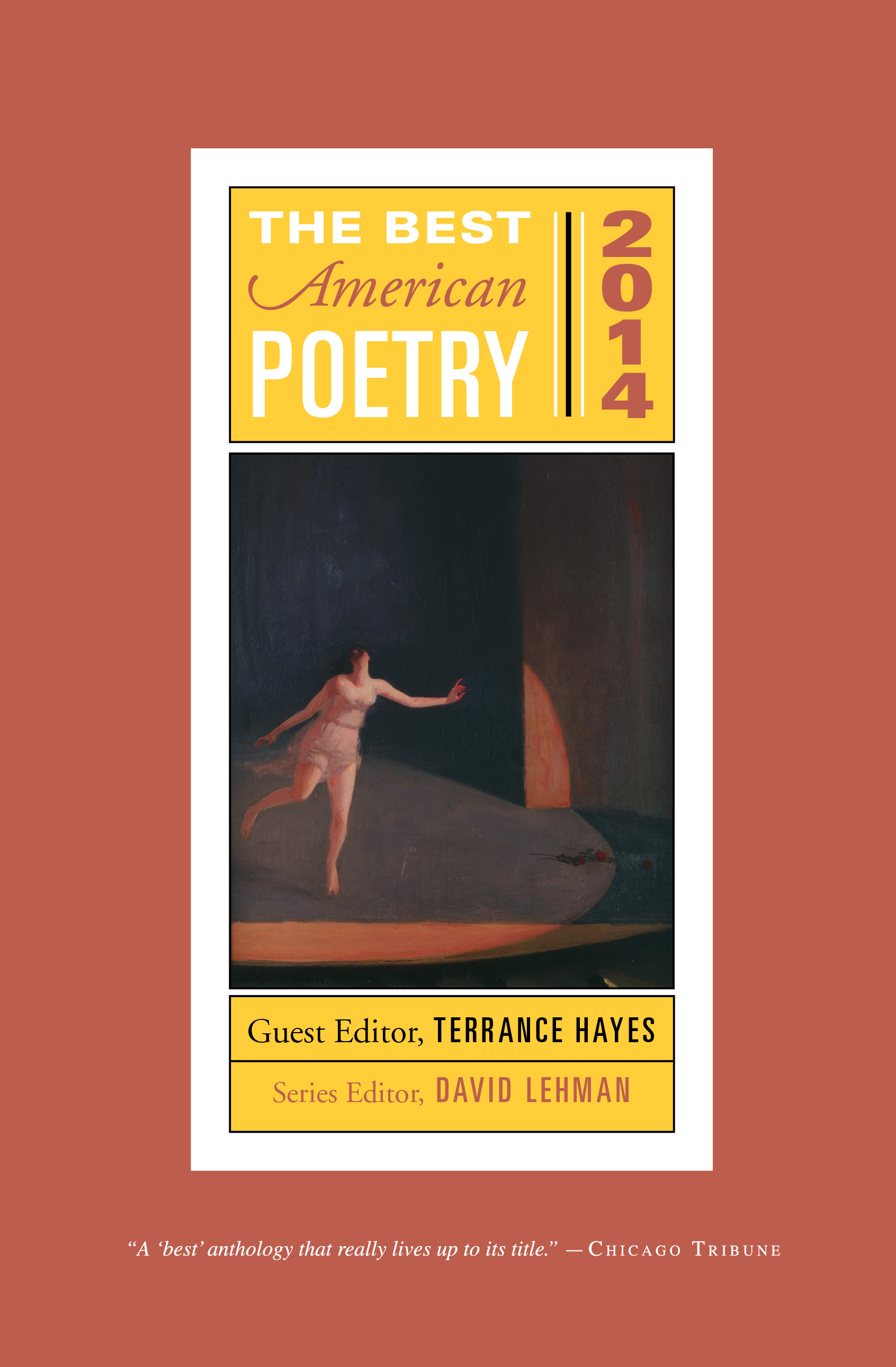

The Best American Poetry 2014

Part of The Best American Poetry series

Edited by David Lehman and Terrance Hayes


About The Book

National Book Award–winning poet Terrance Hayes selects the poems for the 2014 edition of The Best American Poetry, “a ‘best’ anthology that really lives up to its title” (Chicago Tribune).

The first book of poetry that Terrance Hayes ever bought was the 1990 edition of The Best American Poetry, edited by Jorie Graham. Hayes was then an undergrad at a small South Carolina college. He has since published four highly honored books of poetry, is a professor of poetry at the University of Pittsburgh, has appeared multiple times in the series, and is one of today’s most decorated poets. His brazen, restless poems capture the diversity of American culture with singular artistry, grappling with facile assumptions about identity and the complex repercussions of race history in this country.

Always eagerly anticipated, the 2014 volume of The Best American Poetry begins with David Lehman’s “state-of-the-art” foreword followed by an inspired introduction from Terrance Hayes on his picks for the best American poems of the past year. Following the poems is the apparatus for which the series has won acclaim: notes from the poets about the writing of their poems.

Excerpt

The Best American Poetry 2014

FOREWORD by David Lehman

Maybe I dreamed it. Don Draper sat sipping Canadian Club from a coffee mug on Craig Ferguson’s late-night talk show. “Are you on Twitter?” the host asks. “No,” Draper says. “I don’t”—and here he pauses before pronouncing the distasteful verb—“tweet.” Next question. “Do you read a lot of poetry?” The ad agency’s creative director looks skeptical. Though the hero of Mad Men is seen reading Dante’s Inferno in one season of Matthew Weiner’s show and heard reciting Frank O’Hara in another, the question seems to come from left field. “Poetry isn’t really celebrated any more in our culture,” Don says, to which the other retorts, “It can be—if you can write in units of 140 keystrokes.” Commercial break.

The laugh line reveals a shrewd insight into the subject of “poetry in the digital age,” a panel-discussion perennial. The panelists agree that text messaging and Internet blogs will be seen to have exercised some sort of influence on the practice of poetry, whether on the method of composition or on the style and surface of the writing. And surely we may expect the same of a wildly popular social medium with a formal requirement as stringent as the 140-character limit. (To someone with a streak of mathematical mysticism, the relation of that number to the number of lines in a sonnet is a thing of beauty.) What Twitter offers is ultimate immediacy expressed with ultimate concision. “Whatever else Twitter is, it’s a literary form,” says the novelist Kathryn Schulz, who explains how easy it was for her to get addicted to “a genre in which you try to say an informative thing in an interesting way while abiding by its constraint (those famous 140 characters). For people who love that kind of challenge—and it’s easy to see why writers might be overrepresented among them—Twitter has the same allure as gaming.” True, the hard-to-shake habit caused its share of problems. Schulz reports a huge “distractibility increase” and other disturbing symptoms: “I have felt my mind get divided into tweet-size chunks.” Nevertheless there is a reason that she got hooked on this “wide-ranging, intellectually stimulating, big-hearted, super fun” activity.1 When, in an early episode of the Netflix production of House of Cards, one Washington journalist disparages a rival as a “Twitter twat,” you know the word has arrived, and the language itself has changed to accommodate it. There are new terms (“hashtag”), acronyms (“ikr” in Detroit means “I know right?”), shorthand (“suttin” is “something” in Boston).2 Television producers love it (“Keep those tweets coming!”). 
So does Wall Street: when Twitter went public in 2013, the IPO came off without a hitch, and the stock climbed with the velocity of an over-caffeinated momentum investor eager to turn a quick profit.

The desire to make a friend of the new technology is understandable, though it obliges us to overlook some major flaws: the Internet is hell on lining, spacing, italics; line breaks and indentation are often obscured in electronic transmission. The integrity of the poetic line can be a serious casualty. Still, it is fruitless to quarrel with the actuality of change, and difficult to resist it profitably—except, perhaps, in private, where we may revel in our physical books and even, if we like, write with pen or pencil on graph paper or type our thoughts with the Smith-Corona manual to which we have a sentimental attachment. One room in the fine “Drawn to Language” exhibit at the University of Southern California’s Fisher Art Museum in September 2013 was devoted to Susan Silton’s site-specific installation of a circle of tables on which sat ten manual typewriters of different makes, models, sizes, and decades. It was moving to behold the machines not only as objects of nostalgia in an attractive arrangement but as metonymies of the experience of writing in the twentieth century—and as invitations to sit down and hunt and peck away to your heart’s content. Seeing the typewriters in that room I felt as I do when the talk touches on the acquisition of an author’s papers by a university library. It’s odd to be a member of the last generation to have “papers” in this archival and material sense. Odd for an era to slip into a museum while you watch.

You may say—I have heard the argument—that the one-minute poem is not far off. Twitter’s 140-keystroke constraint—together with the value placed on being “up to speed”—brings the clock into the game. Poetry, a byte-size kind of poetry, has been, or soon will be, a benefit of attention deficit disorder. (This statement, or prediction, is not necessarily or not always made in disparagement.) Unlike the telephone, the instruments of social media rely on the written, not the spoken word, and it will be interesting to see what happens when the values of hip-hop lyricists and spoken-word poets, for whom the performative aspects of the art are paramount, tangle with the values of concision, bite, and wit consistent with the rules of the Twitter feed. On the other hand, it is conceivable that the sentence I have just composed will be, for all intents and purposes, anachronistic in a couple of years or less. Among my favorite oxymorons is “ancient computer,” applied to my own desktop.3

* * *

In his famous and famously controversial Rede Lecture at Cambridge University in 1959, the English novelist C. P. Snow addressed the widening chasm between the two dominant strains in our culture.4 There were the humanists on the one side. On the other were the scientists and applied scientists, the agents of technological change. And “a gulf of mutual incomprehension” separated them. Though Snow endeavored to appear evenhanded, it became apparent that he favored the sciences—he opted, in his terms, for the fact rather than the myth. The scientists “have the future in their bones”—a future that will nourish the hungry, clothe the masses, reduce the risk of infant mortality, cure ailments, and prolong life. And “the traditional culture responds by wishing the future did not exist.”

The Rede Lecture came in the wake of the scare set off by the Soviet Union’s launch of Sputnik in October 1957. There was widespread fear that we in the West, and particularly we in the United States, were in danger of falling behind the Russians in the race for space, itself a metaphor for the scientific control of the future. For this reason among others, Snow’s lecture was extraordinarily successful. Introducing a phrase into common parlance, “The Two Cultures” reached great numbers of readers and helped shape a climate friendly to science at the expense of the traditional components of a liberal education. Much in that lecture infuriated the folks on the humanist side of the divide.5 Snow wrote as though humanistic values were possible without humanistic studies. In literature he saw not a corrective or a criticism of life but a threat. He interpreted George Orwell’s 1984 as “the strongest possible wish that the future should not exist” rather than as a warning against the authoritarian impulses of the modern state coupled with its sophistication of surveillance. Snow founded his argument on the unexamined assumption that scientists, in thrall to the truth, can be counted on to do the right thing—an assumption that the history of munitions would explode even if we could all agree on what “the right thing” is. For Snow, who had been knighted and would be granted a life peerage, the future was bound to be an improvement on the past, and the change would be entirely attributable to the people in the white coats in the laboratory. Generalizing from the reactionary political tendencies of certain famous modern writers, Snow floated the suggestion that they—and by implication those who read them—managed to “bring Auschwitz that much nearer.” Looking back at the Rede Lecture five years later, Snow saw no reason to modify the view that intellectuals were natural Luddites, prone to “talk about a pre-Industrial Eden” that never was. 
They ignored the simple truth that the historian J. H. Plumb stated: “No one in his senses would choose to have been born in a previous age unless he could be certain that he would have been born into a prosperous family, that he would have enjoyed extremely good health, and that he could have accepted stoically the death of the majority of his children.” In short, according to Snow, the humanists were content to dwell in a “pretty-pretty past.”

In 1962 F. R. Leavis, then perhaps the most influential literary critic at Cambridge, denounced Snow’s thesis with such vitriol and contempt that he may have done the humanist side more harm than good. “Snow exposes complacently a complete ignorance,” Leavis said in the Richmond Lecture, and “is as intellectually undistinguished as it is possible to be.” Yet, Leavis added, Snow writes in a “tone of which one can say that, while only genius could justify it, one cannot readily think of genius adopting it.”6 Reread today, the Richmond Lecture may be a classic of invective inviting close study. As rhetoric it was devastating. But as a document in a conflict of ideas, the Richmond Lecture left much to be desired. Leavis did not adequately address the charges that Snow leveled at literature and the arts on social and moral grounds.7 The scandal in personalities, the shrillness of tone, eclipsed the subject of the debate, which got fought out in the letters column of the literary press and was all the talk in the senior common rooms and faculty lounges of the English-speaking world.

The controversy ignited by a pair of dueling lectures at Cambridge deserves another look now not only because fifty years have passed and we can better judge what has happened in the intervening period but because more than ever the humanities today stand in need of defense. In universities and liberal arts colleges, these are hard times for the study of ideas. In 2013, front page articles in The New York Times and The Wall Street Journal screamed about the crisis in higher education especially in humanist fields: shrinking enrollments at liberal arts colleges; the shutting down of entire college departments; the elimination of courses and requirements once considered vital. The host of “worrisome long-trends” included “a national decline in the number of graduating high-school seniors, a swarm of technologies driving down costs and profit margins, rising student debt, a soft job market for college graduates and stagnant household incomes.”8 Is that all? No, and it isn’t everything. There has also been a spate of op-ed columns suggesting that students would be wise to save their money, study something that can lead to gainful employment, and forget about majoring in modern dance, art history, philosophy, sociology, theology, or English unless they are independently wealthy.

The cornerstones of the humanities, English and history, have taken a beating. At Yale, English was the most popular major in 1972–73. It did not make the top five in 2012–13. Twenty-one years ago, 216 Yale undergraduates majored in history; less than half that number picked the field last year.9 Harvard—where English majors dwindled from 36 percent of the student body in 1954 to 20 percent in 2012—has issued a report on the precipitous drop. Russell A. Berman of Stanford, in a piece in The Chronicle of Higher Education ominously entitled “Humanist: Heal Thyself,” observed that “the marginalization of the great works of the erstwhile canon has impoverished the humanities,” and that the Harvard report came to this important conclusion. But he noted, too, that it stopped short of calling for a great-books list of required readings. My heart sinks when I read such a piece and arrive at a paragraph in which the topic sentence is, “Clearly majoring in the humanities has long been an anomaly for American undergraduates.”10 Or is such a sentence—constructed as if to sound value-neutral and judgment-free in the proper scientific manner—part of the problem? The ability of an educated populace to read critically, to write clearly, to think coherently, and to retain knowledge—even the ability to grasp the basic rules of grammar and diction—seems to be declining at a pace consonant with the rise of the Internet search engine and the autocorrect function in computer programs.

Not merely the cost but the value of a liberal arts education has come into doubt. The humanists find themselves in a bind. Consider the plight of the English department. “The folly of studying, say, English Lit has become something of an Internet cliché—the stuff of sneering ‘Worst Majors’ listicles that seem always to be sponsored by personal-finance websites,” Thomas Frank writes in Harper’s.11 There is a new philistinism afoot, and the daunting price tag of college or graduate education adds an extra wrinkle to an argument of ferocious intensity. “The study of literature has traditionally been felt to have a unique effectiveness in opening the mind and illuminating it, in purging the mind of prejudices and received ideas, in making the mind free and active,” Lionel Trilling wrote at the time of the Leavis–Snow controversy. “The classic defense of literary study holds that, from the effect which the study of literature has upon the private sentiments of a student, there results, or can be made to result, an improvement in the intelligence, and especially the intelligence as it touches the moral life.”12 It is vastly more difficult today to mount such a defense after three or more decades of sustained assault on canons of judgment, the idea of greatness, the related idea of genius, and the whole vast cavalcade of Western civilization.13 Heather Mac Donald writes more in sorrow than in anger that the once-proud English department at UCLA—which even lately could boast of being home to more undergraduate majors than any other department in the nation—has dismantled its core, doing away with the formerly obligatory four courses in Chaucer, Shakespeare, and Milton. 
You can now satisfy the requirements of an English major with “alternative rubrics of gender, sexuality, race, and class.” The coup, as Mac Donald terms it, took place in 2011 and is but one event in a pattern of academic changes that would replace a theory of education based on a “constant, sophisticated dialogue between past and present” with a consumer mind-set based on “narcissism, an obsession with victimhood, and a relentless determination to reduce the stunning complexity of the past to the shallow categories of identity and class politics. Sitting atop an entire civilization of aesthetic wonders, the contemporary academic wants only to study oppression, preferably his or her own, defined reductively according to gonads and melanin.”14

In the antagonism between science and the humanities, it may now be said that C. P. Snow in “The Two Cultures” was certainly right in one particular. Technology in our culture has routed the humanities. Everyone wants the latest app, the best device, the slickest new gadget. Put on the defensive, spokespersons for the humanities have failed to make an effective case for their fields of study. There have been efforts to promote the digital humanities, it being understood that the adjective “digital” is what rescues “humanities” in the phrase. Has the faculty thrown in the towel too soon? Have literature departments and libraries welcomed the end of the book with unseemly haste? Have the conservators of culture embraced the acceleration of change that may endanger the study of the literary humanities as if—like the clock face, cursive script, and the rotary phone—it, too, can be effectively consigned to the ash heap of the analog era?

There is some resistance to the tyranny of technology, the ruthlessness of the new digital media. And in the incipient resistance, there is the resort to culture as we traditionally knew it—the poem on the printed page, the picture in the gallery, the concerto in the symphony hall. “There is no greater bulwark against the twittering acceleration of American consciousness than the encounter with a work of art, and the experience of a text or an image,” Leon Wieseltier told the graduating class at Brandeis University in May 2013. Wieseltier, the longtime literary editor of The New Republic, feels the situation is dire. “In the digital universe, knowledge is reduced to the status of information.” In truth, however, “knowledge can be acquired only over time and only by method. And the devices that we carry like addicts in our hands are disfiguring our mental lives.” Let us not be so quick to jettison the monuments of unaging intellect. “There is no task more urgent in American intellectual life at this hour than to offer some resistance to the twin imperialisms of science and technology.”15

* * *

One thing you can count on is that people will keep writing as they adjust from one medium to another, analog to digital, paper to computer monitor. Upon the appearance of the 2004 edition of The Best American Poetry (ed. Lyn Hejinian), David Orr wrote that the series stands for “the idea of poetry as a community activity. ‘People are writing poems!’ each volume cries. ‘You, too, could write a poem!’ It’s an appealingly democratic pose, and it has always been the genuinely ‘best’ thing about the Best American series.”16 Is everyone a poet?17 It was Freud who laid the intellectual foundations for the idea. He argued that each of us is a poet when dreaming or making wisecracks or even when making slips of the tongue or pen. If daydreaming is a passive form of creative writing, it follows that the unconscious to which we all have access is the content provider, and what is left to learn is technique. It took the advent of creative writing as an academic field to institutionalize what might be a natural tendency in American democracy. In the proliferation of competent poems, poems that meet a certain standard of artistic finish but may lack staying power, I cannot see much harm except to note one inevitable consequence, which is that of inflation. In economics, inflation takes the form of a devaluation of currency. In poetry, inflation lessens the value that the culture attaches to any individual poem. But this is far from a new development. Byron in a journal entry in 1821 or 1822 captured the economic model with his customary brio: “there are more poets (soi-disant) than ever there were, and proportionally less poetry.”18

Another thing you can count on: at seemingly regular intervals an article will appear in a wide-circulation periodical declaring—as if it hasn’t been said often before—that poetry is finished, kaput, dead, and what are they doing with the corpse? Back in 1888, Walt Whitman read an article forecasting the demise of poetry in fifty years “owing to the special tendency to science and to its all-devouring force.” (Whitman’s comment: “I anticipate the very contrary. Only a firmer, vastly broader, new area begins to exist—nay, is already formed—to which the poetic genius must emigrate.”)19 In his introduction to The Sacred Wood (1920), T. S. Eliot ridiculed the kind of argument encountered in fashionable London circles of the day. Edmund Gosse had written in the Sunday Times: “Poetry is not a formula which a thousand flappers and hobbledehoys ought to be able to master in a week without any training, and the mere fact that it seems to be now practiced with such universal ease is enough to prove that something has gone amiss with our standards.” Here is Eliot’s paraphrase of the Gosse argument: “If too much bad verse is published in London, it does not occur to us to raise our standards, to do anything to educate the poetasters; the remedy is, Kill them off.” (Eliot also asks: “is it wholly the fault of the younger generation that it is aware of no authority that it must respect?”)20 On occasion the death-of-poetry genre can produce something useful; Edmund Wilson’s essay “Is Verse a Dying Technique?” comes to mind. But today you are more likely to find “Poetry and Me: An Elegy.”

In its July 2013 issue, Harper’s published a typical example of the genre, Mark Edmundson’s “Poetry Slam: Or, The Decline of American Verse.”21 The piece by an older academic bewailing the state of something he calls “mainstream American poetry” and praising the poetry he loved as a youth is embarrassing for what it reveals about the author, who is out of touch with the poetry in circulation. And then “mainstream American poetry” is poor turf to stand on: Would you offer a course with that label? Would anyone want to fit into such a category? The professor’s chief complaint appears to be that “there’s no end of poetry being written and published out there,” and though he knows he shouldn’t generalize, he will do just that and say that today’s poets lack ambition—“the poets who now get the balance of public attention and esteem are casting unambitious spells,” which is at least a grudging acknowledgment, if only by virtue of the metaphor, that our poets remain magicians.

When such a piece runs, the magazine subsequently prints a handful of the letters the offending article has provoked. Of the three letters that Harper’s saw fit to print in its September 2013 issue, one writer was vexed that Edmundson had focused “almost exclusively” on white males. A second thought it a shame that the author had overlooked the work of hip-hop lyricists (such as Kendrick Lamar and Nas). The third letter was written by Harvard Professor Stephen Burt, an assiduous critic and reader. He pointed out that there is “something bullying” in the call for “public” poetry. Whose public, he asked: “A public poem, in Edmundson’s view, might be an interest-group poem whose collective has a flag.” Attacks on contemporary American poetry such as Edmundson’s “have been made for centuries” and are best seen as “screeds [that] create an opportunity for those of us who read a lot of poetry to recommend individual poets as we come to poetry-in-general’s defense.”22

Each year in The Best American Poetry we seize that opportunity and ask a distinguished poet to glean the harvest of poems and identify the ones he or she thinks best. Terrance Hayes has undertaken the task with vigor and inventiveness. A native of South Carolina, Hayes went to Coker College on a basketball scholarship, studied the visual arts, and wrote poetry on the side. An instructor directed him to the MFA poetry program at the University of Pittsburgh, where he studied with Toi Derricotte and joined Cave Canem, the organization that has done so much to nourish the remarkable generation of African American poets on the scene today. Terrance won the 2010 National Book Award for his book Lighthead (Penguin). His poems—which have appeared seven times in The Best American Poetry—reflect a deep interest in matters of masculinity, sexuality, and race; a flair for narrative; and a love of verbal games as the key to ad hoc forms and procedures. I was thrilled when Hayes told me that the first book of poems he ever acquired was the 1990 edition of The Best American Poetry, Jorie Graham’s volume, and that he owns all the books in the series. When I asked him to read for the 2014 book, I had in front of me the winter 2010–11 issue of Ploughshares, which Hayes edited. In his introduction, he wrote about a notional three-story museum, the “Sentenced Museum,” which resembles an inverted pyramid with the literature of self-reflection on the ground floor, the language of witness one flight up, and a host of “tangential parlors, wings and galleries” on the third and largest floor. I remember reading the issue and thinking, “as an editor, he’s a natural.”

As ever, this year’s volume includes our elaborate back-of-the-book apparatus. To the value of the comments the poets make on the work chosen for this book, the poets themselves attest. In the 2013 edition, Dorianne Laux comments on her “Song,” “Death permeates the poem, which wasn’t apparent to me until I was asked to write this paragraph.”

* * *

It used to be the death of God that got all the attention—God whose decomposing corpse made the big stink. Was it, in the end, Nietzsche, Freud, Time magazine, or the masses (who preferred, in the end, other opiates) that did Him in? I can’t say, but I take solace in knowing that there are, besides myself, other holdouts refusing to suspend their belief. Meanwhile, the subject has receded to the terrorism and fundamentalism pages of your newspaper, and the focus has long since shifted to literature. The death of the novel worried all-star committees for years. There was a split decision that satisfied no one, and now, with Updike dead and Roth retired, a new consensus is starting to form around the notion that the TV serial as exemplified by The Sopranos, Mad Men, Breaking Bad, House of Cards, and Homeland has supplanted not only the novel but the movie as a mass entertainment form—one that can aspire to be both wildly popular and notably artistic, as the novel was at its best. The past tense in that last clause makes me sad, though I have seen the future and it is even more enthralling than Galsworthy’s Forsyte Saga given the Masterpiece Theatre treatment with Damian Lewis as Soames.

As to poetry, is it dead, does it matter, is there too much of it, does anyone anywhere buy books of poetry? The discussion is fraught with anxiety and perhaps that implies a love of poetry, and a longing for it, and a fear that we may be in danger of losing it if we do not take care to promote it, teach it well, and help it reach the reader whose life depends on it. Will magazine editors continue to fall for a pitch lamenting that poetry has become a “small-time game,” that it is “too hermetic,” or “programmatically obscure,” lacking ambition and public spiritedness? The lack of originality is no bar. Think of how many issues of finance magazines are identical in their contents year after year. Retire at sixty-five. Insider tips from the pros. What to do about bonds in 2014 “and beyond.” Why it makes dollars and sense to “ditch cable.” Or consider the general audience magazine, editors of which will not soon tire of running articles that contend that a woman today either can or cannot “have it all.” I am so sure that death-of-poetry pronouncements will continue to be made that I am tempted to assign the task as a writing exercise. It’s an evergreen.

1. Kathryn Schulz, “Seduced by Twitter,” The Week, December 27, 2013, pp. 40–41.

2. Katy Steinmetz, “The Linguist’s Mother Lode,” Time, September 9, 2013, pp. 56–57. Jacob Eisenstein, a computational linguist at Georgia Tech, is quoted: “Social media has taken the informal peer-to-peer interaction that might have been almost exclusively spoken and put it in a written form. The result of that is a burst of creativity.” The assumption here is that the new is necessarily “creative” in the honorific sense.

3. “Even the best computer will seem positively geriatric by its fifth birthday.” Geoffrey A. Fowler, “Mac Pro Is a Lamborghini, but Who Drives That Fast?” The Wall Street Journal, January 15, 2014, D1.

4. The 1959 Rede Lecture in four parts was published as The Two Cultures and the Scientific Revolution. An expanded version conjoining the lecture with Snow’s subsequent reflections (A Second Look) appeared from Cambridge University Press in 1964.

5. I take the term “humanist” to cover historians and philosophers, literary and cultural critics, music and art historians, professors of English or Romance Languages or comparative literature or East Asian studies, classicists, linguists, jurists and legal scholars, public intellectuals, authors and essayists, most psychologists, and a great many other academics across the board: very nearly everyone not committed professionally to a career in one of the sciences or in technology.

6. The Two Cultures? The Significance of C. P. Snow by F. R. Leavis.

7. For more on the affair, and an especially sensitive and sympathetic reading of Leavis’s “relentlessly withering” attack on Snow, see Stefan Collini, “Leavis v. Snow: The Two-Cultures Bust-Up 50 Years On,” in The Guardian, August 16, 2013. http://www.theguardian.com/books/2013/aug/16/leavis-snow-two-cultures-bust

8. Douglas Belkin, “Private Colleges Squeezed,” The Wall Street Journal, November 10, 2013.

9. “Major Changes,” Yale Alumni Magazine, January/February 2014, p. 20.

10. Russell A. Berman, “Humanist: Heal Thyself,” The Chronicle of Higher Education, June 10, 2013. http://chronicle.com/blogs/conversation/2013/06/10/humanist-heal-thyself/

11. Thomas Frank, “Course Corrections,” Harper’s Magazine, October 2013, p. 10. The editorial writers of the New York Post begin a defense of the liberal arts with “the nightmare scenario for many parents of college students. Suzie comes home from her $50,000-a-year university to tell you this: ‘Mom and Dad, I’ve decided I want to major in early Renaissance poetry.’” New York Post, February 22, 2014.

12. Lionel Trilling, “The Two Environments: Reflections on the Study of English,” in Beyond Culture (1965; rpt. New York: Harcourt Brace Jovanovich, 1978), p. 184. See also Trilling’s lucid account of the Leavis–Snow controversy in the same volume, pp. 126–54.

13. “In according the least legitimacy to the word ‘genius,’ one is considered to sign one’s resignation from all fields of knowledge,” Jacques Derrida said in 2003. The very noun, he said, “makes us squirm.” At the same time that academics banished the word, magazines such as Time and Esquire began to dumb it down, applying “genius” to all manner of folk, including fashion designers, corporate executives, performers, comedians, talk-show hosts, and even point guards who shoot too much (Allen Iverson, circa 2000). See Darrin M. McMahon, “Where Have All the Geniuses Gone?” in The Chronicle Review, October 21, 2013. http://chronicle.com/article/Where-Have-All-the-Geniuses/142353/

14. Heather Mac Donald, “The Humanities Have Forgotten Their Humanity,” The Wall Street Journal, January 3, 2014. http://online.wsj.com/news/articles/SB10001424052702304858104579264321265378790

15. Leon Wieseltier, “Perhaps Culture Is Now the Counterculture,” The New Republic, May 28, 2013. http://www.newrepublic.com/article/113299/leon-wieseltier-commencement-speech-brandeis-university-2013

16. David Orr, “The Best American Poetry 2004: You, Too, Could Write a Poem.” The New York Times Book Review, November 21, 2004.

17. Madeline Schwartzman, an adjunct professor of architecture at Barnard College, is stopping someone on the subway every day and asking the person to write a poem on the spot for “365 Day Subway: Poems by New Yorkers.” Heidi Mitchell, “Artist Solicits Poetry from Other Subterraneans,” The Wall Street Journal, February 1–2, 2014, A15.

18. Byron, “Detached Thoughts” (October 1821 to May 1822), in Byron’s Poetry, ed. Frank D. McConnell (W. W. Norton, 1978), p. 335.

19. Whitman in “A Backward Glance O’er Travel’d Roads” (1888), in Walt Whitman: A Critical Anthology, ed. Francis Murphy (Penguin, 1969), pp. 110–11.

20. Eliot, The Sacred Wood (1920; rpt. Methuen, 1960), p. xv.

21. Harper’s Magazine, July 2013, pp. 61–68.

22. Harper’s Magazine, September 2013, pp. 2–3.

Maybe I dreamed it. Don Draper sat sipping Canadian Club from a coffee mug on Craig Ferguson’s late-night talk show. “Are you on Twitter?” the host asks. “No,” Draper says. “I don’t”—and here he pauses before pronouncing the distasteful verb—“tweet.” Next question. “Do you read a lot of poetry?” The ad agency’s creative director looks skeptical. Though the hero of Mad Men is seen reading Dante’s Inferno in one season of Matthew Weiner’s show and heard reciting Frank O’Hara in another, the question seems to come from left field. “Poetry isn’t really celebrated any more in our culture,” Don says, to which the other retorts, “It can be—if you can write in units of 140 keystrokes.” Commercial break.

The laugh line reveals a shrewd insight into the subject of “poetry in the digital age,” a panel-discussion perennial. The panelists agree that text messaging and Internet blogs will be seen to have exercised some sort of influence on the practice of poetry, whether on the method of composition or on the style and surface of the writing. And surely we may expect the same of a wildly popular social medium with a formal requirement as stringent as the 140-character limit. (To someone with a streak of mathematical mysticism, the relation of that number to the number of lines in a sonnet is a thing of beauty.) What Twitter offers is ultimate immediacy expressed with ultimate concision. “Whatever else Twitter is, it’s a literary form,” says the novelist Kathryn Schulz, who explains how easy it was for her to get addicted to “a genre in which you try to say an informative thing in an interesting way while abiding by its constraint (those famous 140 characters). For people who love that kind of challenge—and it’s easy to see why writers might be overrepresented among them—Twitter has the same allure as gaming.” True, the hard-to-shake habit caused its share of problems. Schulz reports a huge “distractibility increase” and other disturbing symptoms: “I have felt my mind get divided into tweet-size chunks.” Nevertheless there is a reason that she got hooked on this “wide-ranging, intellectually stimulating, big-hearted, super fun” activity.1 When, in an early episode of the Netflix production of House of Cards, one Washington journalist disparages a rival as a “Twitter twat,” you know the word has arrived, and the language itself has changed to accommodate it. There are new terms (“hashtag”), acronyms (“ikr” in Detroit means “I know right?”), shorthand (“suttin” is “something” in Boston).2 Television producers love it (“Keep those tweets coming!”). 
So does Wall Street: when Twitter went public in 2013, the IPO came off without a hitch, and the stock climbed with the velocity of an over-caffeinated momentum investor eager to turn a quick profit.

The desire to make a friend of the new technology is understandable, though it obliges us to overlook some major flaws: the Internet is hell on lining, spacing, italics; line breaks and indentation are often obscured in electronic transmission. The integrity of the poetic line can be a serious casualty. Still, it is fruitless to quarrel with the actuality of change, and difficult to resist it profitably—except, perhaps, in private, where we may revel in our physical books and even, if we like, write with pen or pencil on graph paper or type our thoughts with the Smith-Corona manual to which we have a sentimental attachment. One room in the fine “Drawn to Language” exhibit at the University of Southern California’s Fisher Art Museum in September 2013 was devoted to Susan Silton’s site-specific installation of a circle of tables on which sat ten manual typewriters of different makes, models, sizes, and decades. It was moving to behold the machines not only as objects of nostalgia in an attractive arrangement but as metonymies of the experience of writing in the twentieth century—and as invitations to sit down and hunt and peck away to your heart’s content. Seeing the typewriters in that room I felt as I do when the talk touches on the acquisition of an author’s papers by a university library. It’s odd to be a member of the last generation to have “papers” in this archival and material sense. Odd for an era to slip into a museum while you watch.

You may say—I have heard the argument—that the one-minute poem is not far off. Twitter’s 140-keystroke constraint—together with the value placed on being “up to speed”—brings the clock into the game. Poetry, a byte-size kind of poetry, has been, or soon will be, a benefit of attention deficit disorder. (This statement, or prediction, is not necessarily or not always made in disparagement.) Unlike the telephone, the instruments of social media rely on the written, not the spoken word, and it will be interesting to see what happens when the values of hip-hop lyricists and spoken-word poets, for whom the performative aspects of the art are paramount, tangle with the values of concision, bite, and wit consistent with the rules of the Twitter feed. On the other hand, it is conceivable that the sentence I have just composed will be, for all intents and purposes, anachronistic in a couple of years or less. Among my favorite oxymorons is “ancient computer,” applied to my own desktop.3

* * *

In his famous and famously controversial Rede Lecture at Cambridge University in 1959, the English novelist C. P. Snow addressed the widening chasm between the two dominant strains in our culture.4 There were the humanists on the one side. On the other were the scientists and applied scientists, the agents of technological change. And “a gulf of mutual incomprehension” separated them. Though Snow endeavored to appear evenhanded, it became apparent that he favored the sciences—he opted, in his terms, for the fact rather than the myth. The scientists “have the future in their bones”—a future that will nourish the hungry, clothe the masses, reduce the risk of infant mortality, cure ailments, and prolong life. And “the traditional culture responds by wishing the future did not exist.”

The Rede Lecture came in the wake of the scare set off by the Soviet Union’s launch of Sputnik in October 1957. There was widespread fear that we in the West, and particularly we in the United States, were in danger of falling behind the Russians in the race for space, itself a metaphor for the scientific control of the future. For this reason among others, Snow’s lecture was extraordinarily successful. Introducing a phrase into common parlance, “The Two Cultures” reached great numbers of readers and helped shape a climate friendly to science at the expense of the traditional components of a liberal education. Much in that lecture infuriated the folks on the humanist side of the divide.5 Snow wrote as though humanistic values were possible without humanistic studies. In literature he saw not a corrective or a criticism of life but a threat. He interpreted George Orwell’s 1984 as “the strongest possible wish that the future should not exist” rather than as a warning against the authoritarian impulses of the modern state coupled with its sophistication of surveillance. Snow founded his argument on the unexamined assumption that scientists, in thrall to the truth, can be counted on to do the right thing—an assumption that the history of munitions would explode even if we could all agree on what “the right thing” is. For Snow, who had been knighted and would be granted a life peerage, the future was bound to be an improvement on the past, and the change would be entirely attributable to the people in the white coats in the laboratory. Generalizing from the reactionary political tendencies of certain famous modern writers, Snow floated the suggestion that they—and by implication those who read them—managed to “bring Auschwitz that much nearer.” Looking back at the Rede Lecture five years later, Snow saw no reason to modify the view that intellectuals were natural Luddites, prone to “talk about a pre-Industrial Eden” that never was. 
They ignored the simple truth that the historian J. H. Plumb stated: “No one in his senses would choose to have been born in a previous age unless he could be certain that he would have been born into a prosperous family, that he would have enjoyed extremely good health, and that he could have accepted stoically the death of the majority of his children.” In short, according to Snow, the humanists were content to dwell in a “pretty-pretty past.”

In 1962 F. R. Leavis, then perhaps the most influential literary critic at Cambridge, denounced Snow’s thesis with such vitriol and contempt that he may have done the humanist side more harm than good. “Snow exposes complacently a complete ignorance,” Leavis said in the Richmond Lecture, and “is as intellectually undistinguished as it is possible to be.” Yet, Leavis added, Snow writes in a “tone of which one can say that, while only genius could justify it, one cannot readily think of genius adopting it.”6 Reread today, the Richmond Lecture may be a classic of invective inviting close study. As rhetoric it was devastating. But as a document in a conflict of ideas, the Richmond Lecture left much to be desired. Leavis did not adequately address the charges that Snow leveled at literature and the arts on social and moral grounds.7 The scandal in personalities, the shrillness of tone, eclipsed the subject of the debate, which got fought out in the letters column of the literary press and was all the talk in the senior common rooms and faculty lounges of the English-speaking world.

The controversy ignited by a pair of dueling lectures at Cambridge deserves another look now not only because fifty years have passed and we can better judge what has happened in the intervening period but because more than ever the humanities today stand in need of defense. In universities and liberal arts colleges, these are hard times for the study of ideas. In 2013, front-page articles in The New York Times and The Wall Street Journal screamed about the crisis in higher education, especially in humanist fields: shrinking enrollments at liberal arts colleges; the shutting down of entire college departments; the elimination of courses and requirements once considered vital. The host of “worrisome long-term trends” included “a national decline in the number of graduating high-school seniors, a swarm of technologies driving down costs and profit margins, rising student debt, a soft job market for college graduates and stagnant household incomes.”8 Is that all? No, and it isn’t everything. There has also been a spate of op-ed columns suggesting that students would be wise to save their money, study something that can lead to gainful employment, and forget about majoring in modern dance, art history, philosophy, sociology, theology, or English unless they are independently wealthy.

The cornerstones of the humanities, English and history, have taken a beating. At Yale, English was the most popular major in 1972–73. It did not make the top five in 2012–13. Twenty-one years ago, 216 Yale undergraduates majored in history; fewer than half that number picked the field last year.9 Harvard—where humanities majors dwindled from 36 percent of the student body in 1954 to 20 percent in 2012—has issued a report on the precipitous drop. Russell A. Berman of Stanford, in a piece in The Chronicle of Higher Education ominously entitled “Humanist: Heal Thyself,” observed that “the marginalization of the great works of the erstwhile canon has impoverished the humanities,” and that the Harvard report came to this important conclusion. But he noted, too, that it stopped short of calling for a great-books list of required readings. My heart sinks when I read such a piece and arrive at a paragraph in which the topic sentence is, “Clearly majoring in the humanities has long been an anomaly for American undergraduates.”10 Or is such a sentence—constructed as if to sound value-neutral and judgment-free in the proper scientific manner—part of the problem? The ability of an educated populace to read critically, to write clearly, to think coherently, and to retain knowledge—even the ability to grasp the basic rules of grammar and diction—seems to be declining at a pace consonant with the rise of the Internet search engine and the autocorrect function in computer programs.

Not merely the cost but the value of a liberal arts education has come into doubt. The humanists find themselves in a bind. Consider the plight of the English department. “The folly of studying, say, English Lit has become something of an Internet cliché—the stuff of sneering ‘Worst Majors’ listicles that seem always to be sponsored by personal-finance websites,” Thomas Frank writes in Harper’s.11 There is a new philistinism afoot, and the daunting price tag of college or graduate education adds an extra wrinkle to an argument of ferocious intensity. “The study of literature has traditionally been felt to have a unique effectiveness in opening the mind and illuminating it, in purging the mind of prejudices and received ideas, in making the mind free and active,” Lionel Trilling wrote at the time of the Leavis–Snow controversy. “The classic defense of literary study holds that, from the effect which the study of literature has upon the private sentiments of a student, there results, or can be made to result, an improvement in the intelligence, and especially the intelligence as it touches the moral life.”12 It is vastly more difficult today to mount such a defense after three or more decades of sustained assault on canons of judgment, the idea of greatness, the related idea of genius, and the whole vast cavalcade of Western civilization.13 Heather Mac Donald writes more in sorrow than in anger that the once-proud English department at UCLA—which even lately could boast of being home to more undergraduate majors than any other department in the nation—has dismantled its core, doing away with the formerly obligatory four courses in Chaucer, Shakespeare, and Milton. 
You can now satisfy the requirements of an English major with “alternative rubrics of gender, sexuality, race, and class.” The coup, as Mac Donald terms it, took place in 2011 and is but one event in a pattern of academic changes that would replace a theory of education based on a “constant, sophisticated dialogue between past and present” with a consumer mind-set based on “narcissism, an obsession with victimhood, and a relentless determination to reduce the stunning complexity of the past to the shallow categories of identity and class politics. Sitting atop an entire civilization of aesthetic wonders, the contemporary academic wants only to study oppression, preferably his or her own, defined reductively according to gonads and melanin.”14

In the antagonism between science and the humanities, it may now be said that C. P. Snow in “The Two Cultures” was certainly right in one particular. Technology in our culture has routed the humanities. Everyone wants the latest app, the best device, the slickest new gadget. Put on the defensive, spokespersons for the humanities have failed to make an effective case for their fields of study. There have been efforts to promote the digital humanities, it being understood that the adjective “digital” is what rescues “humanities” in the phrase. Has the faculty thrown in the towel too soon? Have literature departments and libraries welcomed the end of the book with unseemly haste? Have the conservators of culture embraced the acceleration of change that may endanger the study of the literary humanities as if—like the clock face, cursive script, and the rotary phone—it, too, can be effectively consigned to the ash heap of the analog era?

There is some resistance to the tyranny of technology, the ruthlessness of the new digital media. And in the incipient resistance, there is the resort to culture as we traditionally knew it—the poem on the printed page, the picture in the gallery, the concerto in the symphony hall. “There is no greater bulwark against the twittering acceleration of American consciousness than the encounter with a work of art, and the experience of a text or an image,” Leon Wieseltier told the graduating class at Brandeis University in May 2013. Wieseltier, the longtime literary editor of The New Republic, feels the situation is dire. “In the digital universe, knowledge is reduced to the status of information.” In truth, however, “knowledge can be acquired only over time and only by method. And the devices that we carry like addicts in our hands are disfiguring our mental lives.” Let us not be so quick to jettison the monuments of unaging intellect. “There is no task more urgent in American intellectual life at this hour than to offer some resistance to the twin imperialisms of science and technology.”15

* * *

One thing you can count on is that people will keep writing as they adjust from one medium to another, analog to digital, paper to computer monitor. Upon the appearance of the 2004 edition of The Best American Poetry (ed. Lyn Hejinian), David Orr wrote that the series stands for “the idea of poetry as a community activity. ‘People are writing poems!’ each volume cries. ‘You, too, could write a poem!’ It’s an appealingly democratic pose, and it has always been the genuinely ‘best’ thing about the Best American series.”16 Is everyone a poet?17 It was Freud who laid the intellectual foundations for the idea. He argued that each of us is a poet when dreaming or making wisecracks or even when making slips of the tongue or pen. If daydreaming is a passive form of creative writing, it follows that the unconscious to which we all have access is the content provider, and what is left to learn is technique. It took the advent of creative writing as an academic field to institutionalize what might be a natural tendency in American democracy. In the proliferation of competent poems, poems that meet a certain standard of artistic finish but may lack staying power, I cannot see much harm except to note one inevitable consequence, which is that of inflation. In economics, inflation takes the form of a devaluation of currency. In poetry, inflation lessens the value that the culture attaches to any individual poem. But this is far from a new development. Byron in a journal entry in 1821 or 1822 captured the economic model with his customary brio: “there are more poets (soi-disant) than ever there were, and proportionally less poetry.”18

Another thing you can count on: at seemingly regular intervals an article will appear in a wide-circulation periodical declaring—as if it hasn’t been said often before—that poetry is finished, kaput, dead, and what are they doing with the corpse? Back in 1888, Walt Whitman read an article forecasting the demise of poetry in fifty years “owing to the special tendency to science and to its all-devouring force.” (Whitman’s comment: “I anticipate the very contrary. Only a firmer, vastly broader, new area begins to exist—nay, is already formed—to which the poetic genius must emigrate.”)19 In his introduction to The Sacred Wood (1920), T. S. Eliot ridiculed the kind of argument encountered in fashionable London circles of the day. Edmund Gosse had written in the Sunday Times: “Poetry is not a formula which a thousand flappers and hobbledehoys ought to be able to master in a week without any training, and the mere fact that it seems to be now practiced with such universal ease is enough to prove that something has gone amiss with our standards.” Here is Eliot’s paraphrase of the Gosse argument: “If too much bad verse is published in London, it does not occur to us to raise our standards, to do anything to educate the poetasters; the remedy is, Kill them off.” (Eliot also asks: “is it wholly the fault of the younger generation that it is aware of no authority that it must respect?”)20 On occasion the death-of-poetry genre can produce something useful; Edmund Wilson’s essay “Is Verse a Dying Technique?” comes to mind. But today you are more likely to find “Poetry and Me: An Elegy.”

In its July 2013 issue, Harper’s published a typical example of the genre, Mark Edmundson’s “Poetry Slam: Or, The Decline of American Verse.”21 The piece by an older academic bewailing the state of something he calls “mainstream American poetry” and praising the poetry he loved as a youth is embarrassing for what it reveals about the author, who is out of touch with the poetry in circulation. And then “mainstream American poetry” is poor turf to stand on: Would you offer a course with that label? Would anyone want to fit into such a category? The professor’s chief complaint appears to be that “there’s no end of poetry being written and published out there,” and though he knows he shouldn’t generalize, he will do just that and say that today’s poets lack ambition—“the poets who now get the balance of public attention and esteem are casting unambitious spells,” which is at least a grudging acknowledgment, if only by virtue of the metaphor, that our poets remain magicians.

When such a piece runs, the magazine subsequently prints a handful of the letters the offending article has provoked. Of the three letters that Harper’s saw fit to print in its September 2013 issue, one writer was vexed that Edmundson had focused “almost exclusively” on white males. A second thought it a shame that the author had overlooked the work of hip-hop lyricists (such as Kendrick Lamar and Nas). The third letter was written by Harvard Professor Stephen Burt, an assiduous critic and reader. He pointed out that there is “something bullying” in the call for “public” poetry. Whose public, he asked: “A public poem, in Edmundson’s view, might be an interest-group poem whose collective has a flag.” Attacks on contemporary American poetry such as Edmundson’s “have been made for centuries” and are best seen as “screeds [that] create an opportunity for those of us who read a lot of poetry to recommend individual poets as we come to poetry-in-general’s defense.”22

Each year in The Best American Poetry we seize that opportunity and ask a distinguished poet to glean the harvest of poems and identify the ones he or she thinks best. Terrance Hayes has undertaken the task with vigor and inventiveness. A native of South Carolina, Hayes went to Coker College on a basketball scholarship, studied the visual arts, and wrote poetry on the side. An instructor directed him to the MFA poetry program at the University of Pittsburgh, where he studied with Toi Derricotte and joined Cave Canem, the organization that has done so much to nourish the remarkable generation of African American poets on the scene today. Terrance won the 2010 National Book Award for his book Lighthead (Penguin). His poems—which have appeared seven times in The Best American Poetry—reflect a deep interest in matters of masculinity, sexuality, and race; a flair for narrative; and a love of verbal games as the key to ad hoc forms and procedures. I was thrilled when Hayes told me that the first book of poems he ever acquired was the 1990 edition of The Best American Poetry, Jorie Graham’s volume, and that he owns all the books in the series. When I asked him to read for the 2014 book, I had in front of me the winter 2010–11 issue of Ploughshares, which Hayes edited. In his introduction, he wrote about a notional three-story museum, the “Sentenced Museum,” which resembles an inverted pyramid with the literature of self-reflection on the ground floor, the language of witness one flight up, and a host of “tangential parlors, wings and galleries” on the third and largest floor. I remember reading the issue and thinking, “as an editor, he’s a natural.”

As ever, this year’s volume includes our elaborate back-of-the-book apparatus. To the value of the comments the poets make on the work chosen for this book, the poets themselves attest. In the 2013 edition, Dorianne Laux comments on her “Song,” “Death permeates the poem, which wasn’t apparent to me until I was asked to write this paragraph.”

* * *

It used to be the death of God that got all the attention—God whose decomposing corpse made the big stink. Was it, in the end, Nietzsche, Freud, Time magazine, or the masses (who preferred, in the end, other opiates) that did Him in? I can’t say, but I take solace in knowing that there are, besides myself, other holdouts refusing to suspend their belief. Meanwhile, the subject has receded to the terrorism and fundamentalism pages of your newspaper, and the focus has long since shifted to literature. The death of the novel worried all-star committees for years. There was a split decision that satisfied no one, and now, with Updike dead and Roth retired, a new consensus is starting to form around the notion that the TV serial as exemplified by The Sopranos, Mad Men, Breaking Bad, House of Cards, and Homeland has supplanted not only the novel but the movie as a mass entertainment form—one that can aspire to be both wildly popular and notably artistic, as the novel was at its best. The past tense in that last clause makes me sad, though I have seen the future and it is even more enthralling than Galsworthy’s Forsyte Saga given the Masterpiece Theatre treatment with Damian Lewis as Soames.

As to poetry, is it dead, does it matter, is there too much of it, does anyone anywhere buy books of poetry? The discussion is fraught with anxiety and perhaps that implies a love of poetry, and a longing for it, and a fear that we may be in danger of losing it if we do not take care to promote it, teach it well, and help it reach the reader whose life depends on it. Will magazine editors continue to fall for a pitch lamenting that poetry has become a “small-time game,” that it is “too hermetic,” or “programmatically obscure,” lacking ambition and public spiritedness? The lack of originality is no bar. Think of how many issues of finance magazines are identical in their contents year after year. Retire at sixty-five. Insider tips from the pros. What to do about bonds in 2014 “and beyond.” Why it makes dollars and sense to “ditch cable.” Or consider the general audience magazine, editors of which will not soon tire of running articles that contend that a woman today either can or cannot “have it all.” I am so sure that death-of-poetry pronouncements will continue to be made that I am tempted to assign the task as a writing exercise. It’s an evergreen.

1. Kathryn Schulz, “Seduced by Twitter,” The Week, December 27, 2013, pp. 40–41.

2. Katy Steinmetz, “The Linguist’s Mother Lode,” Time, September 9, 2013, pp. 56–57. Jacob Eisenstein, a computational linguist at Georgia Tech, is quoted: “Social media has taken the informal peer-to-peer interaction that might have been almost exclusively spoken and put it in a written form. The result of that is a burst of creativity.” The assumption here is that the new is necessarily “creative” in the honorific sense.

3. “Even the best computer will seem positively geriatric by its fifth birthday.” Geoffrey A. Fowler, “Mac Pro Is a Lamborghini, but Who Drives That Fast?” The Wall Street Journal, January 15, 2014, D1.

4. The 1959 Rede Lecture in four parts was published as The Two Cultures and the Scientific Revolution. An expanded version conjoining the lecture with Snow’s subsequent reflections (A Second Look) appeared from Cambridge University Press in 1964.

5. I take the term “humanist” to cover historians and philosophers, literary and cultural critics, music and art historians, professors of English or Romance Languages or comparative literature or East Asian studies, classicists, linguists, jurists and legal scholars, public intellectuals, authors and essayists, most psychologists, and a great many other academics across the board: very nearly everyone not committed professionally to a career in one of the sciences or in technology.

6. The Two Cultures? The Significance of C. P. Snow by F. R. Leavis.

7. For more on the affair, and an especially sensitive and sympathetic reading of Leavis’s “relentlessly withering” attack on Snow, see Stefan Collini, “Leavis v. Snow: The Two-Cultures Bust-Up 50 Years On,” in The Guardian, August 16, 2013. http://www.theguardian.com/books/2013/aug/16/leavis-snow-two-cultures-bust

8. Douglas Belkin, “Private Colleges Squeezed,” The Wall Street Journal, November 10, 2013.

9. “Major Changes,” Yale Alumni Magazine, January/February 2014, p. 20.

10. Russell A. Berman, “Humanist: Heal Thyself,” The Chronicle of Higher Education, June 10, 2013. http://chronicle.com/blogs/conversation/2013/06/10/humanist-heal-thyself/

11. Thomas Frank, “Course Corrections,” Harper’s Magazine, October 2013, p. 10. The editorial writers of the New York Post begin a defense of the liberal arts with “the nightmare scenario for many parents of college students. Suzie comes home from her $50,000-a-year university to tell you this: ‘Mom and Dad, I’ve decided I want to major in early Renaissance poetry.’” New York Post, February 22, 2014.

12. Lionel Trilling, “The Two Environments: Reflections on the Study of English,” in Beyond Culture (1965; rpt. New York: Harcourt Brace Jovanovich, 1978), p. 184. See also Trilling’s lucid account of the Leavis–Snow controversy in the same volume, pp. 126–54.

13. “In according the least legitimacy to the word ‘genius,’ one is considered to sign one’s resignation from all fields of knowledge,” Jacques Derrida said in 2003. The very noun, he said, “makes us squirm.” At the same time that academics banished the word, magazines such as Time and Esquire began to dumb it down, applying “genius” to all manner of folk, including fashion designers, corporate executives, performers, comedians, talk-show hosts, and even point guards who shoot too much (Allen Iverson, circa 2000). See Darrin M. McMahon, “Where Have All the Geniuses Gone?” in The Chronicle Review, October 21, 2013. http://chronicle.com/article/Where-Have-All-the-Geniuses/142353/

14. Heather Mac Donald, “The Humanities Have Forgotten Their Humanity,” The Wall Street Journal, January 3, 2014. http://online.wsj.com/news/articles/SB10001424052702304858104579264321265378790

15. Leon Wieseltier, “Perhaps Culture Is Now the Counterculture,” The New Republic, May 28, 2013. http://www.newrepublic.com/article/113299/leon-wieseltier-commencement-speech-brandeis-university-2013

16. David Orr, “The Best American Poetry 2004: You, Too, Could Write a Poem,” The New York Times Book Review, November 21, 2004.

17. Madeline Schwartzman, an adjunct professor of architecture at Barnard College, is stopping someone on the subway every day and asking the person to write a poem on the spot for “365 Day Subway: Poems by New Yorkers.” Heidi Mitchell, “Artist Solicits Poetry from Other Subterraneans,” The Wall Street Journal, February 1–2, 2014, A15.

18. Byron, “Detached Thoughts” (October 1821 to May 1822), in Byron’s Poetry, ed. Frank D. McConnell (W. W. Norton, 1978), p. 335.

19. Whitman in “A Backward Glance O’er Travel’d Roads” (1888), in Walt Whitman: A Critical Anthology, ed. Francis Murphy (Penguin, 1969), pp. 110–11.

20. Eliot, The Sacred Wood (1920; rpt. Methuen, 1960), p. xv.

21. Harper’s Magazine, July 2013, pp. 61–68.

22. Harper’s Magazine, September 2013, pp. 2–3.

Product Details

- Publisher: Scribner (September 9, 2014)

- Length: 240 pages

- ISBN13: 9781476708157

Raves and Reviews

"A 'best' anthology that really lives up to its title."

-- Chicago Tribune
